Today’s post was authored by Dimitar Antov, a Ph.D. economist, consultant, and analytics leader who specializes in applying empirical analysis, Big Data, and advanced analytics to highly complex business problems and strategy implementations. Contact BTG to start a project with Dimitar or other highly skilled independent talent.

Determining the appropriate level of pricing is an integral part of any effective and comprehensive go-to-market strategy. How do you select the optimal level of pricing? This is one of the most important questions to tackle primarily because the pricing you choose will have an impact on nearly every other decision as you formulate your go-to-market strategy. Below, I offer a methodological perspective on different approaches you can use to determine your pricing strategy. I will also make a clear distinction between price changes and value delivered within a framework that ties in the benefits to the consumer.

Bain & Company research established that 18% of companies have no internal capabilities and processes for their pricing decisions. It turns out that a large fraction of managers are not relying on analytics but rather on subjective factors such as historical use cases and their own gut feelings. Could these managers do better by integrating analytics and leveraging available data assets? Yes! In fact, I suspect that few would dispute that data-driven price-setting mechanisms are superior and the north star for companies that wish to thrive in the marketplace, but implementing such systems can be a weighty task. To help you reduce your reliance on subjective factors, here we will cover four approaches that increase the relevance of data, analytic insights, and statistics in your pricing strategies.

Setting a price too high or too low can have negative consequences. If price levels are too low, profits could be non-existent or even negative. Unless you are cross-subsidizing another offering in the portfolio (the way Costco does with its rotisserie chicken offering), or seeking to reap some medium- to long-term pay-offs such as customer loyalty (the hallmark approach of Amazon in its early days of establishing itself as market leader in the online retail space), being a loss leader rarely makes sense as a long-term strategy. When price levels are set too high, sales will stagnate and competitors will take over, again leaving profits non-existent. Economic theory tells us that an ideal price balances competitive forces and customers’ utility from consumption. Wouldn’t it be nice if we had a simple framework to use and apply such a theory? Without one, how can we even hope to determine the optimal price levels?

A Framework for Thinking About Pricing Strategy

Value = Benefits / Price is a framework that allows you to derive price as a function of the value offered to consumers and the benefits they receive from consumption. If price is too low and benefits are held constant, value would likely be higher than other offerings in the marketplace. This high value would cause demand to surge as customers try to take advantage of the situation. In trying to meet such a spike in demand, providers of the products are likely to run into capacity constraints. If price is too high and benefits are held constant, value would likely be lower than consumers’ willingness to pay, translating into weakened demand that in turn would be picked up by competitors with lower prices. The challenge is to accurately estimate the appropriate level of price, since neither benefits nor value is directly observed. What can we do to accomplish this?
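
To make the arithmetic concrete, here is a minimal Python sketch of the Value = Benefits / Price relationship. The benefit score, the candidate prices, and the competitor's value are hypothetical numbers chosen purely for illustration; in practice benefits are not directly observed and would have to be estimated with one of the approaches discussed in this post.

```python
def value(benefits: float, price: float) -> float:
    """Value = Benefits / Price, as in the framework above."""
    if price <= 0:
        raise ValueError("price must be positive")
    return benefits / price


def price_for_value(benefits: float, target_value: float) -> float:
    """Rearranged framework: Price = Benefits / Value."""
    if target_value <= 0:
        raise ValueError("target value must be positive")
    return benefits / target_value


# Hypothetical inputs (assumptions, not data from this article).
BENEFITS = 120.0        # estimated benefit score, in dollar-equivalent units
COMPETITOR_VALUE = 1.5  # value offered by a rival product

for candidate in (60.0, 80.0, 100.0):
    v = value(BENEFITS, candidate)
    if v > COMPETITOR_VALUE:
        note = "value above rival: expect demand to surge"
    elif v < COMPETITOR_VALUE:
        note = "value below rival: expect demand to leak to competitors"
    else:
        note = "value matches rival"
    print(f"price={candidate:.2f} value={v:.2f} -> {note}")

# Price at which our value equals the rival's value.
parity_price = price_for_value(BENEFITS, COMPETITOR_VALUE)
print(f"parity price: {parity_price:.2f}")
```

With benefits held constant, lowering the price raises value and raising it lowers value, which is the trade-off the framework is meant to expose.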

One way of trying to estimate benefits and value is to use an analytics toolkit. These tools allow for a data-driven and disciplined approach to setting prices in a way that maximizes profit and minimizes the chance of expensive errors. Many pricing analyses try to avoid more complex analysis by relying directly on observed historical data. It is important to recognize the limitations of this approach. Specifically, historical data only lets you evaluate price levels you have observed in the past. Any price below the low end or above the high end of the observed range cannot be evaluated through historical data. Imagine applying your historical data to address the dynamics of a COVID-19 pandemic world!

You could overcome some of the limitations of historical analysis with primary research data generated by interviews where you ask purchasers for information on their shopping decisions, but primary research comes with its own weak spots. It runs into problems that arise when consumers self-report on their thoughts and behaviors (more on this below). Regardless of how much an organization relies on historical data or “listens” to the consumer, it should remain agile in how it sets prices. No price should be set in stone. Instead, a sensible pricing strategy will account for changes in market conditions and allow for iterating through different price levels based on analytic outputs. Price is what customers are paying but it is value that managers can create by varying the pricing up or down. The effect that price has on sales provides a very strong indicator of whether value is appropriately balanced with benefits offered.

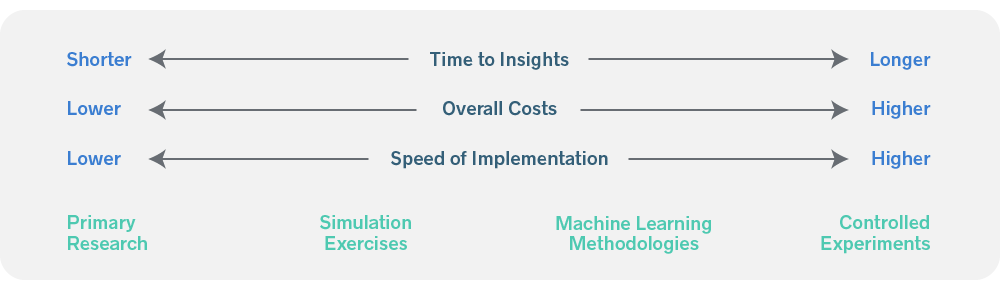

There are four distinct approaches to setting up a robust pricing architecture. They are not mutually exclusive and can often be used in combination with one another to provide the best results.

- The first approach is to conduct primary research and ask consumers directly about the right level of price. Fielding shopper surveys and collecting data on representative samples allows managers to run some advanced analytics.

- The second approach involves carrying out simulation exercises where you simulate multiple price levels to evaluate their impact on buy rates and estimate demand. Conjoint studies are hallmarks for simulation approaches in market research and are especially relevant for new product introductions where historical data is unavailable.

- The third approach relies on collecting point-of-sale data, market, geography, and consumer characteristics and leverages machine learning methodologies to estimate the right level of price.

- Finally, the fourth approach employs the design and application of controlled experiments, e.g., A/B tests, for quick results in the field that can be rolled out more broadly after you have gained confidence in the outcomes of the initial test.

Prior to selecting a price-setting approach, it is important to recognize and consider the key benefits and weaknesses of each of these four alternatives. None offers a silver bullet for picking your optimal price, but depending on your ability to capture and measure value and benefits, each of the four alternatives could be more or less suitable for identifying your optimal price levels.

Distinguishing Characteristics of Each Approach to Pricing Strategy

Primary Research

Qualitative and quantitative insights derived through primary research, such as focus groups and consumer surveys, can provide rich information on how consumers are interacting with the category and your product specifically. You might ask consumers directly how prices affect purchase decisions and establish the impact of price hikes or reductions on demand. While primary research can be helpful, managers should be careful when relying on the stated importance of price levels, given the tendency for consumers to overstate their preference for low prices. Instead of relying on stated importance, managers can leverage statistical techniques to determine the derived importance of price changes for the value received during a purchase decision. In this way, analytics can turn qualitative insights into quantitative ones for effective pricing recommendations.
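
One common way to compute derived importance is to correlate respondents' satisfaction with each attribute against their overall purchase intent. The sketch below assumes that simple correlation-based variant; the survey responses and attribute names are made up for illustration, and a real study might use regression or another driver-analysis technique instead.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equally long numeric lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


# Hypothetical survey: eight respondents, ratings on a 1-5 scale.
purchase_intent = [5, 4, 4, 2, 3, 1, 5, 2]
attribute_ratings = {
    "price": [4, 4, 3, 3, 3, 2, 4, 3],
    "quality": [5, 4, 5, 2, 3, 1, 5, 2],
    "convenience": [3, 2, 4, 3, 2, 3, 3, 4],
}

# Derived importance: how strongly each rating moves with purchase intent.
derived = {
    name: pearson(ratings, purchase_intent)
    for name, ratings in attribute_ratings.items()
}
ranked = sorted(derived, key=derived.get, reverse=True)

for name in ranked:
    print(f"{name:12s} derived importance = {derived[name]:+.2f}")
```

Comparing this ranking with what respondents say matters most is one way to spot attributes, price included, whose stated importance is overstated.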

Simulation Exercises

With simulation exercises, managers can field short surveys and utilize conjoint simulation techniques to discover customers’ revealed preferences on a variety of price levels and features. These approaches allow for the testing of numerous product attributes and shopping alternatives. The main limitation of simulation approaches lies in their strong assumptions about consumer preferences and their inability to capture the wide variation in shopping environments. Despite these limitations, simulations are a quick and powerful way of estimating customers’ preferences and the impact of those preferences on profits.
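
As a rough illustration of what a conjoint-based simulator does once the survey has been analyzed, the sketch below converts part-worth utilities into shares of preference with a logit rule. The part-worths, price points, product tiers, and the single rival product are all hypothetical assumptions; estimating the part-worths from survey data is a separate step that is not shown here.

```python
from math import exp

# Hypothetical part-worth utilities, as a conjoint study might estimate them.
PRICE_UTILS = {9.99: 0.8, 12.99: 0.2, 15.99: -1.0}
TIER_UTILS = {"basic": 0.0, "premium": 0.9}


def utility(price, tier):
    """Additive utility: sum of the part-worths of each attribute level."""
    return PRICE_UTILS[price] + TIER_UTILS[tier]


def preference_shares(products):
    """Logit share of preference for each product in the scenario."""
    weights = {
        name: exp(utility(price, tier))
        for name, (price, tier) in products.items()
    }
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


def simulate_our_share(our_price):
    """Our share against one rival selling a basic tier at 9.99."""
    market = {"ours": (our_price, "premium"), "rival": (9.99, "basic")}
    return preference_shares(market)["ours"]


# Simulate each tested price level for our premium product.
for price in sorted(PRICE_UTILS):
    share = simulate_our_share(price)
    print(f"price={price:.2f} share={share:.1%} revenue index={price * share:.2f}")
```

The revenue index (price times share) is only a relative measure; it ignores costs, market size, and the option of not buying at all, each of which a fuller simulator would need to model.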

Machine Learning Methodologies

Machine learning methods that estimate optimal price levels utilize various statistical and modeling techniques, the most common of which are regression-based models. Relying on historical sales data allows managers to conduct competitive assessments and benchmarking. In addition, they can test how variation in the macroeconomy and other uncontrollable factors influences consumption and interacts with price levels to affect the value delivered to consumers. The issue with these approaches in general is that they rely on untestable assumptions about model form and do not accurately size up top-line growth.
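
A minimal example of a regression-based model is a log-log fit of units sold on price, whose slope is an estimate of price elasticity. The sketch below assumes that specific functional form, a constant unit cost, and made-up weekly sales figures; it is one simple instance of the technique, not a recommended production model.

```python
from math import log


def fit_log_log(prices, units):
    """Least-squares fit of ln(units) = intercept + elasticity * ln(price)."""
    x = [log(p) for p in prices]
    y = [log(q) for q in units]
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    elasticity = sxy / sxx
    intercept = mean_y - elasticity * mean_x
    return intercept, elasticity


def profit_maximizing_price(unit_cost, elasticity):
    """Price that maximizes profit under constant elasticity and constant cost."""
    if elasticity >= -1:
        raise ValueError("formula only holds for elastic demand (elasticity < -1)")
    return unit_cost * elasticity / (1 + elasticity)


# Hypothetical weekly observations (assumptions, not real sales data).
prices = [4.0, 4.5, 5.0, 5.5, 6.0]
units = [520, 430, 350, 300, 250]
UNIT_COST = 2.0

intercept, elasticity = fit_log_log(prices, units)
suggested = profit_maximizing_price(UNIT_COST, elasticity)
in_range = min(prices) <= suggested <= max(prices)

print(f"estimated elasticity: {elasticity:.2f}")
print(f"suggested price: {suggested:.2f}")
print("within observed price range" if in_range else "extrapolation: treat with caution")
```

The range check reflects the limitation of historical data noted earlier: a suggested price outside the prices actually observed rests entirely on the assumed model form.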

Controlled Experiments

Controlled experiments allow for numerous quick iterations and capture the wide variation in shopping environments. They can be agile in testing and are associated with high speeds of implementation. A test is set up by splitting the population into two subsamples, one called the “test” and the other the “control”. The test group is selected such that it is identical to the control in terms of market conditions, consumers, representative geographies, etc. The only difference between the test and control groups lies in price levels. In the control group, price is held constant, but in the test group different price levels are tested to estimate the effect they have on sales. One needs to be aware that techniques such as controlled experiments require broader departmental alignment and coordination, and are likely more expensive.
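
The test-and-control setup can be sketched as follows: stores are randomly split into two groups, and sales are then compared with a Welch t-statistic. The store IDs, the sales figures, and the choice of Welch's statistic are illustrative assumptions; a real experiment would also need a sample-size calculation and a check that the two groups are comparable before the price change.

```python
import random
from math import sqrt
from statistics import mean, variance


def split_stores(store_ids, seed=0):
    """Randomly assign stores to test and control groups of equal size."""
    rng = random.Random(seed)
    shuffled = list(store_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def welch_t(test, control):
    """Difference in mean sales and its Welch t-statistic."""
    diff = mean(test) - mean(control)
    std_err = sqrt(variance(test) / len(test) + variance(control) / len(control))
    return diff, diff / std_err


# Hypothetical store IDs, split at random before the price change.
test_stores, control_stores = split_stores(range(1, 13), seed=42)

# Hypothetical weekly unit sales per store after the test price increase.
control_sales = [100, 104, 98, 101, 97, 103]  # price held constant
test_sales = [92, 95, 90, 96, 91, 94]         # higher test price

diff, t_stat = welch_t(test_sales, control_sales)
print(f"test stores: {sorted(test_stores)}")
print(f"mean change in units: {diff:+.1f} (t = {t_stat:.2f})")
```

A large t-statistic in absolute value suggests the sales difference is unlikely to be noise, but whether the higher price is worth it still depends on the margin gained per unit.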

Often, rather than relying on a single approach to set optimal price levels, you would combine approaches to devise a robust pricing strategy. In some instances, you may use all four approaches together. Primary research, such as focus groups, is an ideal place to start formulating your hypotheses in direct exchanges with consumers. Then, you could proceed with collecting survey data to supplement store-level sales data in various machine learning modeling applications. After results are evaluated and the pricing dynamics are well understood, you could proceed with test-and-control experiments in the field for quick confirmation of strategy prior to broader national roll-outs. Irrespective of the combination of methods you employ, a pricing framework that leverages data assets and analytic techniques is the preferable way to determine optimal levels for prices charged and the value delivered in the marketplace.
